AI Integration

CutReady’s AI features are powered by the agentive crate — a shared Rust library providing a pluggable LLM backend with streaming responses, function calling, and multiple agent configurations.

Provider Architecture

The agentive crate defines a provider trait that abstracts over different LLM services:

```rust
#[async_trait]
trait LlmProvider: Send + Sync {
    async fn complete(&self, messages: &[Message]) -> Result<String>;

    async fn complete_structured<T: DeserializeOwned>(
        &self,
        messages: &[Message],
        schema: &JsonSchema,
    ) -> Result<T>;
}
```

This trait enables swapping providers without changing the rest of the application. Currently supported:

| Provider | Auth Method | Notes |
|---|---|---|
| Microsoft Foundry | API key or Azure OAuth | Azure AI Foundry project endpoints |
| Azure OpenAI | API key or Azure OAuth | Standard Azure OpenAI resources |
| OpenAI | API key | Direct OpenAI API access |
| Anthropic | API key | Claude models |
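To illustrate how a trait boundary lets the application swap providers, here is a minimal sketch. It is deliberately simplified: the trait is synchronous and uses only the standard library, and `ChatProvider`, `EchoProvider`, `CannedProvider`, `ask`, and the `Message` fields are hypothetical names chosen for the example, not the agentive crate's API.

```rust
/// Hypothetical chat message; the real `Message` type may look different.
#[derive(Debug, Clone)]
struct Message {
    role: String,
    content: String,
}

/// Simplified, synchronous stand-in for a provider trait (an assumption:
/// the real trait is async and also offers structured output).
trait ChatProvider {
    fn complete(&self, messages: &[Message]) -> Result<String, String>;
}

/// Hypothetical provider that echoes the most recent user message.
struct EchoProvider;

impl ChatProvider for EchoProvider {
    fn complete(&self, messages: &[Message]) -> Result<String, String> {
        messages
            .iter()
            .rev()
            .find(|m| m.role == "user")
            .map(|m| format!("echo: {}", m.content))
            .ok_or_else(|| "no user message".to_string())
    }
}

/// Hypothetical provider that always returns a fixed reply.
struct CannedProvider {
    reply: String,
}

impl ChatProvider for CannedProvider {
    fn complete(&self, _messages: &[Message]) -> Result<String, String> {
        Ok(self.reply.clone())
    }
}

/// Application code depends only on the trait, so any provider can be passed in.
fn ask(provider: &dyn ChatProvider, question: &str) -> Result<String, String> {
    let messages = vec![Message {
        role: "user".to_string(),
        content: question.to_string(),
    }];
    provider.complete(&messages)
}
```

Calling `ask(&EchoProvider, "hi")` and `ask(&CannedProvider { reply: "ok".into() }, "hi")` exercises two different backends through the same call site, which is the property the trait is meant to provide.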

Foundry / Azure OpenAI API

The Azure-family providers connect via the Foundry or Azure OpenAI API with:

- OAuth authentication — Azure AD token flow with Tenant ID and Client ID

- Streaming — Server-sent events for real-time response streaming

- Model selection — Configurable model name (e.g., `gpt-4o`)

API endpoint, credentials, and model are configured in Settings → AI Provider.
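The streaming item above relies on server-sent events (SSE). As a rough sketch of what consuming such a stream involves, the function below extracts the `data:` payload of each event from a buffered response body. The function name `parse_sse_data` and the payload shapes in the usage note are assumptions for illustration; a real client would parse incrementally as bytes arrive.

```rust
/// Collects the `data:` payload of each complete server-sent event in `body`.
/// Events end with a blank line; multiple `data:` lines within one event are
/// joined with '\n'. An event that is not terminated by a blank line is
/// treated as incomplete and dropped.
fn parse_sse_data(body: &str) -> Vec<String> {
    let mut events = Vec::new();
    let mut current: Vec<&str> = Vec::new();

    for line in body.lines() {
        if line.is_empty() {
            // Blank line: dispatch whatever data has accumulated.
            if !current.is_empty() {
                events.push(current.join("\n"));
                current.clear();
            }
        } else if let Some(rest) = line.strip_prefix("data:") {
            // One optional space after the colon is not part of the payload.
            current.push(rest.strip_prefix(' ').unwrap_or(rest));
        }
        // Other fields (event:, id:, retry:) and comment lines are ignored here.
    }

    events
}
```

For a body such as `"data: {\"delta\":\"Hel\"}\n\ndata: [DONE]\n\n"`, this returns the two payload strings in order. The JSON shape is made up for the example; the `[DONE]` sentinel follows the convention used by OpenAI-style streaming endpoints.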

Function Calling (Tool Use)

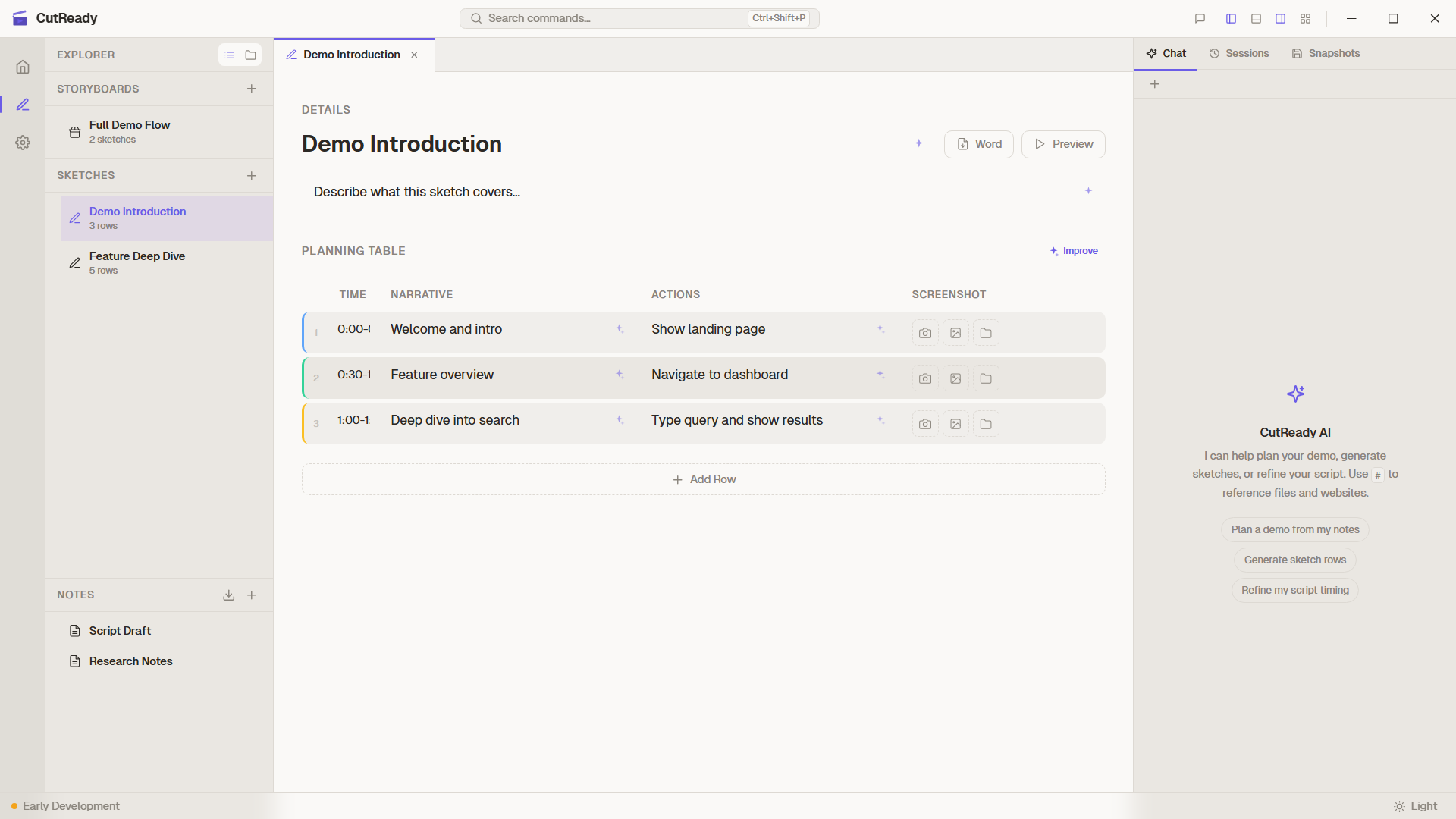

The AI assistant uses function calling to interact with the user’s project. Tool definitions are sent as part of the system prompt, and the model invokes them as needed during conversation.

Available Tools

| Tool | Parameters | Purpose |
|---|---|---|
| `list_project_files` | optional image flag | List sketches, notes, storyboards, and optionally screenshots |
| `read_note` / `write_note` | note path, content | Read or write markdown notes |
| `read_sketch` / `write_sketch` | sketch path, rows | Read a sketch or replace all planning rows |
| `update_planning_row` | index, row data | Update a single planning row |
| `read_storyboard` / `write_storyboard` | storyboard path, items | Read or update storyboard metadata and sequence |
| `design_plan` | sketch path, row index, plan | Save the Designer agent’s plain-English visual brief |
| `set_row_visual` | sketch path, row index, visual | Save or remove an Elucim DSL visual for a row |
| `delegate_to_agent` | agent ID, message | Delegate a focused subtask to another agent |
| `fetch_url` | URL | Fetch clean web page text plus a deduplicated links section |
| `search_web` | query | Search public web results when Internet Search is enabled |
| `recall_memory` / `save_memory` | memory query/content | Reuse workspace memory across sessions |

Tool Execution Flow

- The model returns a `tool_call` in its response

- The Rust runner dispatches the tool call to the project tool executor

- The backend executes the operation (file read, write, web fetch, search, or visual save)

- The result is returned to the model as a tool response message

- The model continues generating its response with the tool result

The frontend receives streamed status, tool-call, tool-result, and delta events over Tauri channels so users can see what the agent is doing.
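The execution flow above can be sketched as a small loop. This is an illustration under stated assumptions, not the actual runner: `ModelTurn`, `ToolExecutor`, `run_turn`, the string-based transcript, and the `max_steps` guard are all hypothetical, and the model is represented by a closure so the example stays self-contained.

```rust
use std::collections::HashMap;

/// One model response: either final text or a request to call a tool.
enum ModelTurn {
    Text(String),
    ToolCall { name: String, args: String },
}

/// Hypothetical executor that maps tool names to handlers.
struct ToolExecutor {
    handlers: HashMap<String, Box<dyn Fn(&str) -> Result<String, String>>>,
}

impl ToolExecutor {
    fn new() -> Self {
        Self { handlers: HashMap::new() }
    }

    fn register(&mut self, name: &str, f: impl Fn(&str) -> Result<String, String> + 'static) {
        self.handlers.insert(name.to_string(), Box::new(f));
    }

    /// Runs the named tool; failures become text so the model can react to them.
    fn dispatch(&self, name: &str, args: &str) -> String {
        match self.handlers.get(name) {
            Some(handler) => handler(args).unwrap_or_else(|e| format!("error: {e}")),
            None => format!("error: unknown tool {name}"),
        }
    }
}

/// Calls the model, executes any requested tool, appends the result to the
/// transcript, and repeats until the model answers with text.
/// `max_steps` is an assumed safety limit against endless tool loops.
fn run_turn(
    model: &mut dyn FnMut(&[String]) -> ModelTurn,
    tools: &ToolExecutor,
    transcript: &mut Vec<String>,
    max_steps: usize,
) -> Option<String> {
    for _ in 0..max_steps {
        match model(transcript.as_slice()) {
            ModelTurn::Text(text) => return Some(text),
            ModelTurn::ToolCall { name, args } => {
                let result = tools.dispatch(&name, &args);
                // Stand-in for a tool response message sent back to the model.
                transcript.push(format!("tool:{name}:{result}"));
            }
        }
    }
    None
}
```

Returning tool errors as text rather than aborting is a design choice in this sketch: it lets the model see the failure and decide how to continue.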

Model Capabilities

When the provider exposes model metadata, CutReady records the model’s context length and capability tags such as vision and Responses API support. The model picker shows these tags, vision mode can warn when the selected model lacks image support, and conversation compaction uses the configured context window.
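A minimal sketch of how such metadata might be used follows. Everything here is assumed for illustration: the `ModelInfo` fields, the `"vision"` tag string, the chars-divided-by-four token estimate, and the drop-oldest strategy, which stands in for whatever compaction CutReady actually performs.

```rust
/// Hypothetical record of provider-reported model metadata.
struct ModelInfo {
    context_length: usize,
    capabilities: Vec<String>,
}

impl ModelInfo {
    fn supports(&self, tag: &str) -> bool {
        self.capabilities.iter().any(|c| c == tag)
    }
}

/// Returns a warning when vision mode is on but the model lacks the tag.
fn vision_warning(model: &ModelInfo, vision_mode: bool) -> Option<&'static str> {
    if vision_mode && !model.supports("vision") {
        Some("Selected model does not advertise image support")
    } else {
        None
    }
}

/// Crude token estimate: roughly four characters per token, plus one per message.
fn estimate_tokens(messages: &[String]) -> usize {
    messages.iter().map(|m| m.chars().count() / 4 + 1).sum()
}

/// Drops the oldest messages until the estimate fits the context window,
/// always keeping at least the most recent message.
fn compact(messages: &mut Vec<String>, model: &ModelInfo) {
    while messages.len() > 1 && estimate_tokens(messages) > model.context_length {
        messages.remove(0);
    }
}
```

With a context length of 10 and three 20-character messages (estimated at 6 tokens each), `compact` removes the two oldest messages and keeps the last one.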

Prompt Design

System Prompts

Each agent has a system prompt that defines its personality and behavior:

- Planner — Focused on demo structure, timing, and logical flow

- Writer — Focused on engaging narration and natural voiceover language

- Editor — Focused on precision, making minimal targeted changes

- Designer — Focused on generating Elucim DSL visuals for sketch rows

System prompts include the tool definitions (JSON schema) so the model knows what tools are available and how to invoke them.
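As a rough illustration of combining an agent's instructions with tool definitions, the sketch below appends each tool's name and JSON Schema to a persona string. The `ToolDef` type, the `build_system_prompt` function, and the layout of the resulting prompt are assumptions; the actual prompt format is not described here.

```rust
/// Hypothetical tool definition: a name plus its JSON Schema as a raw string.
struct ToolDef {
    name: &'static str,
    parameters_schema: &'static str,
}

/// Appends each tool's name and schema to the agent's persona text.
fn build_system_prompt(persona: &str, tools: &[ToolDef]) -> String {
    let mut prompt = String::from(persona);
    prompt.push_str("\n\nAvailable tools:\n");
    for tool in tools {
        prompt.push_str(&format!("- {}: {}\n", tool.name, tool.parameters_schema));
    }
    prompt
}

/// Example input: one tool whose schema is invented for this sketch.
fn example_prompt() -> String {
    let tools = [ToolDef {
        name: "read_note",
        parameters_schema: r#"{"type":"object","properties":{"path":{"type":"string"}},"required":["path"]}"#,
    }];
    build_system_prompt("Focus on demo structure, timing, and logical flow.", &tools)
}
```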

Silent Mode

Sparkle button actions use silent mode (the `silent: true` flag) on `sendChatPrompt()`. In silent mode:

- The prompt is not displayed in the chat panel

- The response is not shown as a chat message

- Tool calls execute normally and update the sketch

- Actions are logged only in the Activity Panel

This keeps the chat history focused on intentional conversations.

Agent Configuration

Built-in Agents

The four built-in agents (Planner, Writer, Editor, Designer) ship with CutReady and have read-only system prompts that are tuned for their respective tasks.

Custom Agents

Users can define custom agents in Settings → Agents with:

- Name — Display name in the agent selector

- System prompt — Custom instructions that define the agent’s behavior

Custom agents use the same tool set and model as built-in agents, but with a different system prompt that shapes the AI’s approach.
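The distinction between read-only built-in agents and editable custom agents could be modeled along these lines. This is a sketch with hypothetical types (`Agent`, `AgentRegistry`) and placeholder prompt text taken from the agent descriptions above; rejecting duplicate names is an added assumption.

```rust
/// Hypothetical agent record; field names are illustrative.
#[derive(Debug, Clone, PartialEq)]
struct Agent {
    name: String,
    system_prompt: String,
    built_in: bool,
}

struct AgentRegistry {
    agents: Vec<Agent>,
}

impl AgentRegistry {
    /// Starts with the four built-in agents, using placeholder prompts.
    fn with_built_ins() -> Self {
        let built_ins = [
            ("Planner", "Focus on demo structure, timing, and logical flow."),
            ("Writer", "Focus on engaging narration and natural voiceover language."),
            ("Editor", "Focus on precision, making minimal targeted changes."),
            ("Designer", "Focus on generating Elucim DSL visuals for sketch rows."),
        ];
        let agents = built_ins
            .iter()
            .map(|(name, prompt)| Agent {
                name: name.to_string(),
                system_prompt: prompt.to_string(),
                built_in: true,
            })
            .collect();
        Self { agents }
    }

    /// Adds a custom agent; duplicate names are rejected (an assumption).
    fn add_custom(&mut self, name: &str, system_prompt: &str) -> Result<(), String> {
        if self.agents.iter().any(|a| a.name == name) {
            return Err(format!("agent {name} already exists"));
        }
        self.agents.push(Agent {
            name: name.to_string(),
            system_prompt: system_prompt.to_string(),
            built_in: false,
        });
        Ok(())
    }

    /// Changes a custom agent's prompt; built-in prompts stay read-only.
    fn set_prompt(&mut self, name: &str, system_prompt: &str) -> Result<(), String> {
        let agent = self
            .agents
            .iter_mut()
            .find(|a| a.name == name)
            .ok_or_else(|| format!("unknown agent {name}"))?;
        if agent.built_in {
            return Err(format!("{name} is built in and its prompt is read-only"));
        }
        agent.system_prompt = system_prompt.to_string();
        Ok(())
    }
}
```

Because only the system prompt differs between agents in this model, the tool set and model selection can live outside the registry and be shared by every agent.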

Streaming Architecture

Responses are streamed from the LLM to the frontend via Tauri Channels:

- The frontend sends a chat message via a Tauri command

- The Rust backend opens a streaming connection to the LLM API

- Response chunks are forwarded through a Tauri Channel to the frontend

- The frontend renders tokens as they arrive for a responsive feel

- Tool calls are detected mid-stream and executed before continuing
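The producer and consumer roles in this flow can be sketched with a standard-library channel standing in for a Tauri Channel. The `StreamEvent` type and the function names are hypothetical, the "LLM" is a fixed list of chunks, and tool-call events are left out to keep the example short.

```rust
use std::sync::mpsc;
use std::thread;

/// Events forwarded to the frontend; the exact shapes are assumptions.
#[derive(Debug, PartialEq)]
enum StreamEvent {
    Delta(String),
    Done,
}

/// Backend side: forwards each chunk as soon as it is available.
fn stream_response(chunks: Vec<&'static str>, tx: mpsc::Sender<StreamEvent>) {
    for chunk in chunks {
        // A send error means the receiver was dropped, so stop streaming.
        if tx.send(StreamEvent::Delta(chunk.to_string())).is_err() {
            return;
        }
    }
    let _ = tx.send(StreamEvent::Done);
}

/// Frontend side: appends tokens as they arrive until the stream ends.
fn render(rx: mpsc::Receiver<StreamEvent>) -> String {
    let mut text = String::new();
    for event in rx {
        match event {
            StreamEvent::Delta(token) => text.push_str(&token),
            StreamEvent::Done => break,
        }
    }
    text
}

/// Wires both sides together: the producer runs on its own thread while
/// the consumer renders incrementally.
fn stream_demo() -> String {
    let (tx, rx) = mpsc::channel();
    let producer = thread::spawn(move || stream_response(vec!["Hel", "lo"], tx));
    let text = render(rx);
    producer.join().expect("producer thread panicked");
    text
}
```

In the real application the receiving end lives in the webview rather than in Rust, so this only models the ordering guarantees: chunks arrive in the order they were sent, and an explicit end-of-stream event tells the consumer when to stop.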